The workflow¶

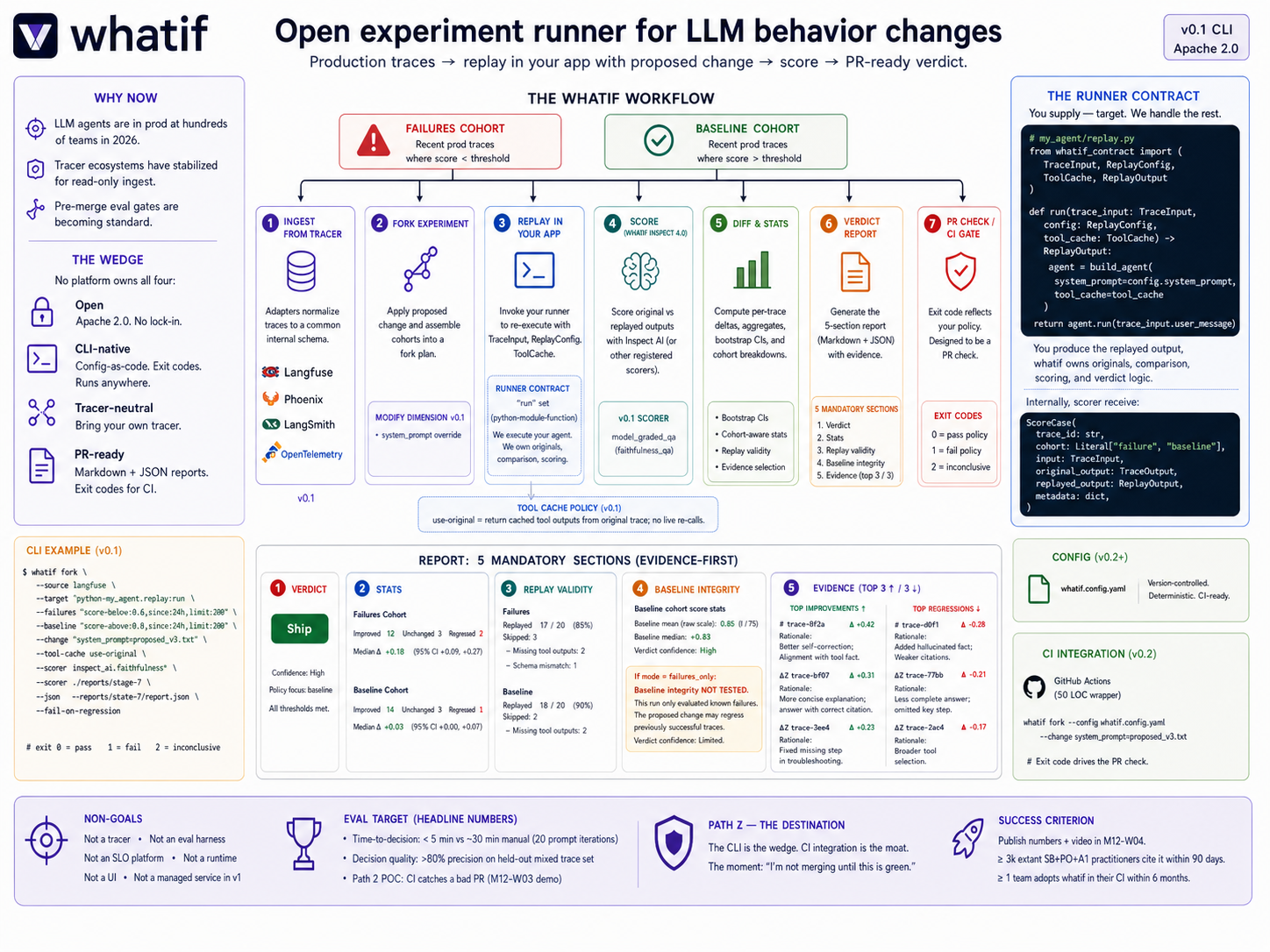

whatif runs a six-step loop that turns a proposed LLM change into a defensible decision.

The six steps¶

1. Observe production behavior¶

Inputs come from your tracer of choice: alerts, incidents, failed traces from Langfuse / LangSmith / Phoenix, plus an optional baseline of recently-successful traces.

In v0.1, baseline coverage runs by default-you can opt out with selection.mode: failures_only, but the verdict report will print a loud “Verdict confidence: limited” banner.

2. Define the experiment¶

whatif fork \

--source langfuse \

--target "python:my_agent.replay:run" \

--change system_prompt=prompts/v3.txt \

--tool-cache use-original \

--score inspect_ai:faithfulness

A change can be a new system prompt (v0.1), a model swap (v0.2), or a tool / parameter override (v0.3). Selection policy and scorer are pluggable.

3. Fork and replay¶

whatif selects matching traces from your source, applies the change, and replays each through your - -target`. Original tool outputs are reused from the cache so destructive side effects don’t re-fire.

A replay validation report is produced inline: how many traces were replayable, how many were skipped, and why.

4. Score and diff¶

Each trace produces an original and a replayed output. The scorer (Inspect AI by default) computes per-trace deltas. Aggregate stats include bootstrap confidence intervals and per-cohort breakdowns.

5. Evidence-backed verdict¶

The report has five mandatory sections: Verdict, Stats, Replay validity, Baseline integrity, Evidence. The Evidence section includes representative improvements and regressions with the judge’s rationale-numbers without rationale are not trustworthy enough to ship from.

6. Decide and ship¶

Markdown report for humans. JSON report for machines. Exit codes that reflect your declared decision policy:

Exit code |

Meaning |

|---|---|

|

Passed configured policy. |

|

Failed configured policy. |

|

Inconclusive (setup / replay / scoring failure). |

whatif enforces your declared policy. It does not certify “safety.”

Path Z-the same loop, in CI¶

The CLI is the wedge; CI integration is the moat. The same six steps wired into a GitHub Action become a pre-merge regression gate for LLM behavior. See Path Z.

What replaces the manual workflow¶

Before whatif, the loop was: copy traces → paste into a playground → manual scoring → eyeball the diff. Five fragmented tools, ~30 minutes per hypothesis, low decision quality.

After whatif: one CLI command, ~5 minutes per hypothesis, evidence-backed verdict. The same loop, made into a single coherent workflow.